Construction of Elon Musk’s supercomputer facility is nearly complete, with installation expected to begin in the next few months.

In May, The Information reported that the American billionaire and his company xAI are planning to connect 100,000 specialized graphics cards into a supercomputer called the “Gigafactory of Computing”, expected to be operational by fall next year.

According to Tom’s Hardware, Musk is working hard to get the system completed on schedule. He chose Supermicro to provide cooling solutions for both data centers: the one that houses Tesla’s supercomputer and the one for xAI.

“Thank you Musk for taking the lead in choosing liquid cooling technology for giant AI data centers. This move could help preserve 20 billion trees for the planet,” Charles Liang, founder and CEO of Supermicro, wrote on X on July 2.

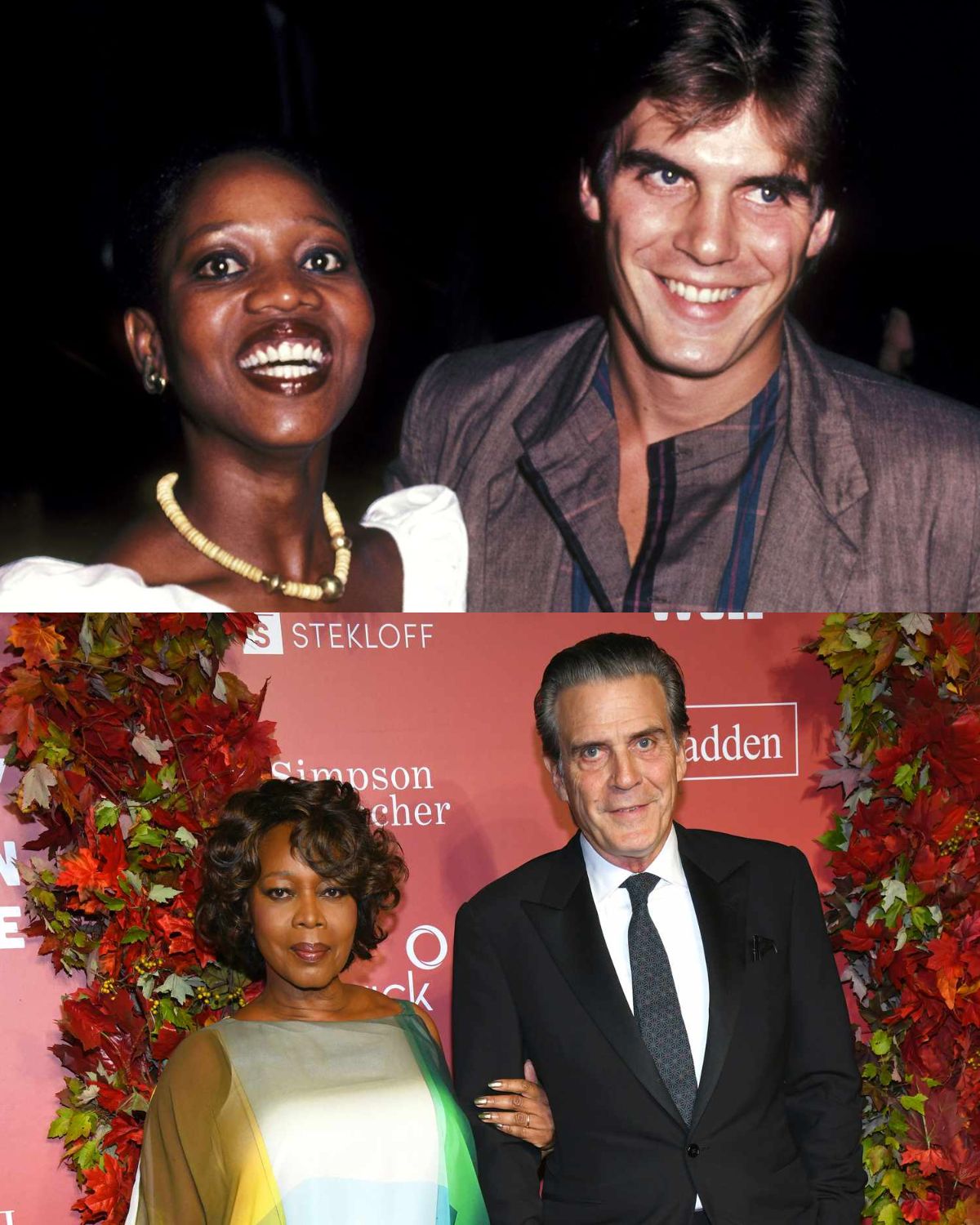

Charles Liang shares a photo with Elon Musk at a data center. Photo: X

AI data centers are notoriously power-hungry, and Supermicro hopes its liquid cooling solution will reduce infrastructure electricity costs by up to 89 percent compared to traditional air-cooled solutions.

In a previous tweet, Musk estimated that the Gigafactory supercomputer would consume 130 megawatts (130 million watts) of electricity when deployed. Combined with Tesla’s AI hardware once installed, the factory’s power consumption could reach 500 megawatts. The billionaire said construction of the facility is almost complete, with installation of machinery expected to begin in the coming months.

Musk is building two supercomputer clusters in parallel, one for Tesla and one for the xAI startup, both estimated to be worth billions of dollars. According to Reuters, if completed, the Gigafactory of Computing will be the world’s most powerful supercomputer, four times more powerful than the largest GPU cluster today.

xAI was founded by Elon Musk in July last year, competing directly with OpenAI and Microsoft. The startup later launched the Grok AI model, which competes with ChatGPT. Musk said earlier this year that training the next Grok 2 model would require about 20,000 Nvidia H100 GPUs, while Grok 3 could require 100,000 H100 chips.